Here’s a round-up of a few articles I’ve written about assessing Computing and ICT over the years. Although some of them were written a while ago, I believe they are still useful and relevant.

Here’s a different and more engaging way of testing pupils’ knowledge, skills and understanding. This is an updated version of an article I wrote in 2020. This version includes some ChatGPT-generated additions.

I have serious misgivings about the use of AI to assess students’ work.

If AI generates an essay, and another AI grades it, has anything useful actually happened?

Trying to be helpful to pupils while assessing their understanding could actually be counter-productive.

The attractive thing about badges is that a school can invent its own categories and achievement levels.

Rubrics look like an easy way to tackle assessment. But they can be deceptive in that respect, and can cause the unwary to slip up.

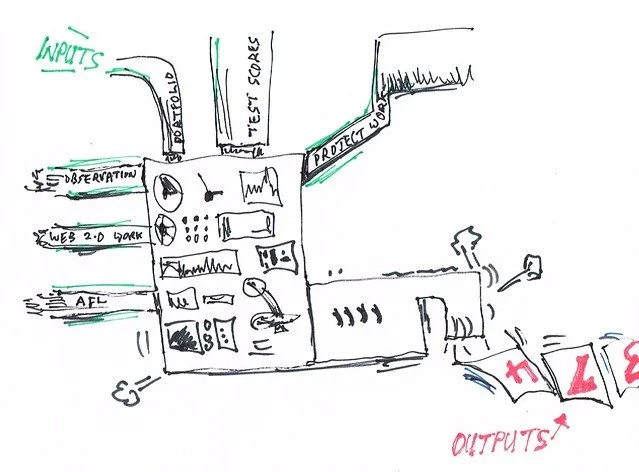

Spotting unexpected results in the mock exams of scores, or even hundreds, of students need no longer be a nightmare.

I have to say I think it is really insulting to have someone who looks like he has just finished studying for ‘A’ Levels himself telling us why exams are best.

The only thing worse than feeling tired but knowing you have to mark 30 books by tomorrow morning is that feeling of ennui at 5 o’clock on a grim Sunday evening, when all you want to do is curl up with a mug of tea and watch a movie, but those exercise books are smirking back at you.

A new assessment resource has come to my attention. It shows the keywords and synonyms in the SAMR and Bloom’s Taxonomy models, and apps which enable the teacher to address those areas.

Why bother with theories of assessment? Surely all that matters is whether or not it works?

What’s wrong with teacher-assessed grades?

Failing is empowering.